Artificial Intelligence: 6 Things You Need To Know


John Crossley

19 Nov 2015

OK, so I’m a little bit obsessed with Artificial Intelligence right now. After reading (and writing) quite a bit on this recently I wanted to distil some thoughts and findings.

Here’s the thing. This technology, if it reaches its full potential, could be more intelligent than humans, resulting in society-defining changes to our world.

There’s been heaps of media coverage lately dedicated to the impact that robotics and automation will have on the workforce. The Bank of England said this month that 15 million jobs in Britain are at risk as a result.

The debate is certainly heating up, but many still question if a true Artificial Intelligence will ever be achieved. Here’s one of those listicle things to explain what’s what.

1) Low level AI is everywhere right now

(image: Mashable)

Artificial Narrow Intelligence (ANI) or Weak AI is where we are now and it’s absolutely everywhere. Sasspants Siri is Weak AI, as is Google Maps, as is the genius chess computer that you never beat.

Weak AI excels at one function or task, but it can’t ‘think’ in the same way as your brain or mine. Generally, it’s making our lives easier, and as the Internet of Things becomes more prevalent (with the tidal wave of data this brings) its reach is only going to increase.

2) A supercomputer with a bigger brain than yours will be here in less than a decade

(image: aboutmodafinil.com)

If Moore’s Law holds true (and computing power keeps doubling every two years) we’ll have a supercomputer with the same processing power as the human brain by the year 2025. That’s an awful lot of juice. But…
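To put a rough number on that doubling, here’s a quick back-of-the-envelope sketch. It assumes a perfectly steady doubling every two years, starting from 2015 (the year this was written). The function name `growth_factor` is made up for illustration, and the sketch says nothing about how much processing power a brain actually has, only how much the hardware would multiply.

```python
def growth_factor(start_year, end_year, doubling_period_years=2.0):
    """Multiplier in computing power, ASSUMING a steady doubling
    every `doubling_period_years` (an idealised Moore's Law)."""
    doublings = (end_year - start_year) / doubling_period_years
    return 2 ** doublings


# Ten years at one doubling every two years is five doublings: 2^5.
factor = growth_factor(2015, 2025)
print(f"2015 -> 2025: about {factor:.0f}x the computing power")
# prints "2015 -> 2025: about 32x the computing power"
```

So under that assumption, a decade buys you roughly a 32-fold increase. Stretch the doubling period or let Moore’s Law slow down, and the date slips accordingly.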

3) AIs can’t do ‘routine intelligence’, yet

(image: Giphy)

Programming that brain to have the same level of ‘intelligence’ as a human is much harder. AIs are great at searching through data and making mind-numbingly large calculations, but they’re not so great when it comes to simple tasks that we all do on autopilot, like walking. Look at these stupid robots falling over, and try not to laugh.

If the boffins are able to tackle this ‘routine intelligence’ issue, the stuff that we walking, breathing humans take for granted, then we’ll be sure to reach the level of Artificial General Intelligence (AGI) – or Strong AI. When that happens is another matter, with some experts saying it’s just around the corner, while others say it won’t be until 2050, or even not at all.

4) An intelligence explosion will change everything

(image: 33rd Square)

Now this is when it gets scary. Sure, those robots look silly, but the rate at which they’ll go from looking ridiculous to almost god-like could be pretty quick. This is known as the ‘intelligence explosion’.

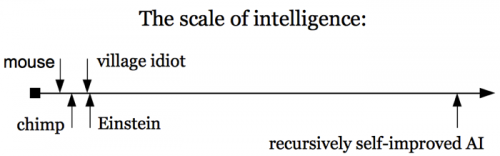

How ‘smart’ this machine will be is a bit too much for our puny brains to comprehend. To us, the intelligence gap between the stupidest person you’ve ever met and Einstein is huge. To AIs? Tiny. And if AIs are getting smarter at an exponential rate, we’re very quickly going to be knocked over.

Just think about how useful Weak AI is to us right now. Strong AI, interacting with all those billions of devices connected to the internet, would be something else.

5) An Artificial Super Intelligence would be god-like to us

(image: psychesingularity)

If you were to draw the intelligence explosion on a graph, the line would go pretty much vertical. We could go from an AGI to an Artificial Super Intelligence (ASI) at a blistering speed.

The difference between our brains and an ASI is like putting Einstein up against a god. It’s mindboggling. Relativity would be breakfast. Calculations that are impossible for our minds (like what lies at the heart of a black hole, for example) an ASI would brush aside. World hunger could be solved. Intergalactic space travel would be sorted. We could even conquer our own mortality. AI would be the last invention we ever need.

6) But it could go the other way, quite horribly

(image: Giphy)

For the sake of balance, it’s worth pointing this out. Things could go really, really wrong – think a bit closer to the dystopian nightmare imagined in Hollywood that we’re all familiar with. Actual, proper scientists a lot smarter than me are really worried – like Stephen Hawking, for instance.

But it won’t be ‘unfriendly’ – it will simply be amoral and obsessed with fulfilling its programmed goal, and that alone could spell disaster. For instance, if it’s programmed to make the most perfect drawing pin with as much skill as it can learn, and humans are in the way of creating that ubiquitous piece of office stationery…they have to go.

I’ll finish on an even cheerier note. The ASI could create an army of tiny nanorobots that could multiply exponentially. A grey goo will consume all matter in the universe and convert it into drawing pins. Humans create ASI – universe is converted into nothing but drawing pins. The end.

So, on the one hand we could conquer the universe and, on the other, we could destroy it. With technology advancing at such a pace, AI of this kind is a very real possibility in our lifetimes.

We’re right to be debating the impact of automation, but the discussion needs to be much broader. What rules do we need to govern AI? And who’s responsible for driving this forward?

We need to get our house in order, or before we know what’s happened there’s a chance we’ll all be turned into floating stationery in space.