Super-human AI is coming (and it might not even destroy mankind)


Jack Simpson

06 Oct 2016

Developing super-human machine intelligence without putting the right controls in place would be like chimps creating people and expecting them to do as they’re told.

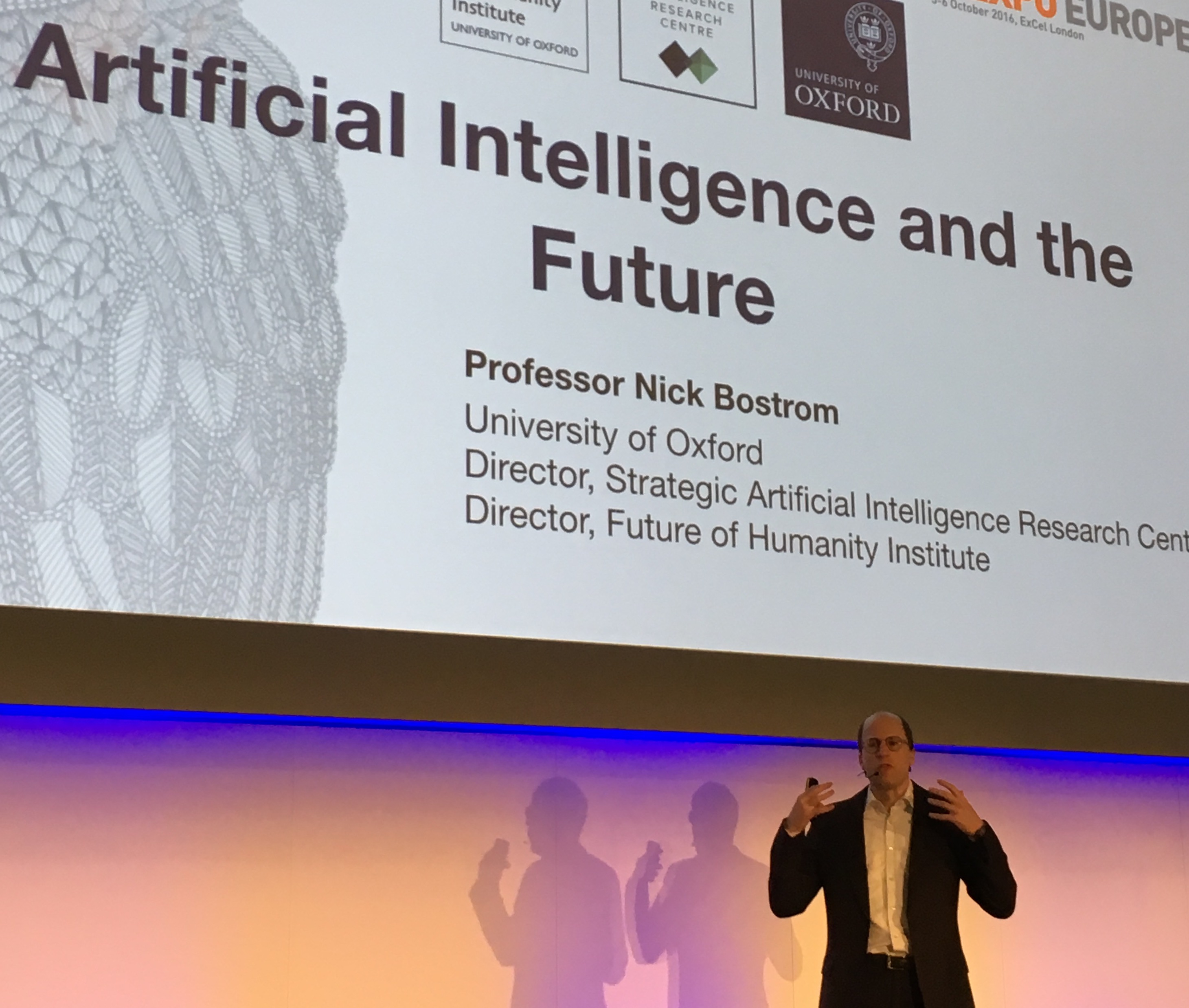

This was the ear-catching and potentially prophetic point that Oxford University’s Professor Nick Bostrom made as he spoke to a packed keynote crowd during his opening talk at IP Expo yesterday.

The forthcoming AI revolution will be as significant as the rise of Homo sapiens many thousands of years ago, he argued, suggesting it could be the third ‘major revolution’ following the agricultural revolution some 12,000 years ago and the industrial one of the early 19th century.

Were machine intelligence to fully succeed, he said, it could replicate the rapid rise in human intelligence that separated us from all other species in the first place.

But even speaking in those terms doesn’t do it justice…

How far could machine intelligence go?

The gap in intelligence between present-day humans and our earliest ancestors is relatively tiny compared with the distance AI could travel from where it started in just a few short decades.

And we had thousands of decades to develop ours.

AlphaGo, the AI built by Google-owned DeepMind, beat Lee Sedol, one of the world’s best players of the board game Go, a decade earlier than many expected. It reached that level in just a couple of years.

But what’s actually possible in the long run? And what will remain firmly in the minds of science fiction fans?

Bostrom presented the results of a survey in which he asked the world’s leading AI experts how likely we are to achieve human-level AI (HLAI), and in what timeframe.

All of them, he said, believe there’s a substantial chance we’ll see HLAI within the lifetimes of every person in the room. And certainly within the lifetimes of our children.

When machines outwit their inventors

But what happens next? What happens when the intelligence we create outstrips our own?

This is where we come back to Bostrom’s point about chimps creating humans and expecting to control them.

Once AI reaches a certain point, human intelligence will become irrelevant: unable to match that of our artificial counterparts, and therefore no longer ‘needed’ for progress. We’ll be left in the metaphorical dust by the very machines we created.

Can we really expect those machines to obey us, then? To respect us? You only need to look at the relationship between humans and animals throughout history to see what happens when one group of beings far outstrips the intelligence of all others.

A terrifying thought, no doubt. But Bostrom argues there is a way to avoid such Skynet-level days of doom and still live the AI dream scientists and sci-fi fans have been hoping for…

Putting control measures in place

Back to that chimp quote again – “without putting the right controls in place…”

The idea of building machines far more intelligent than humans is kind of absurd when you think about it. Arguably suicidal.

How do you scale something like that in a way that’s safe and controlled and unlikely to end with Arnold Schwarzenegger’s equivalent going back in time to destroy some poor unwitting scientist?

As Bostrom unsettlingly pointed out, you can’t assume AI won’t at some point be capable of strategic or even deceptive behaviour. Yes, we can always ‘unplug’ the system, but relying on that is asking for trouble.

Instead, he argued, we should aim to create ‘value alignment’ when developing AI.

While that sounds like the kind of nonsense you’d expect to fall out of a marketing manager’s mouth, the meaning behind it is actually quite powerful.

If the machine wants the same thing as us, the need for conflict will never arise.

Well, that’s the theory…

Could human error be our downfall?

The difficulty, as always, lies in the detail. It’s hard enough to specify exactly what you want in human language; doing so for a machine is far harder.

You can try to build protective functionality into a machine’s intelligence, but if even one parameter is left out, or isn’t coded in a completely explicit and accurate way, the machine may push that parameter to an extreme value in pursuit of its goal.

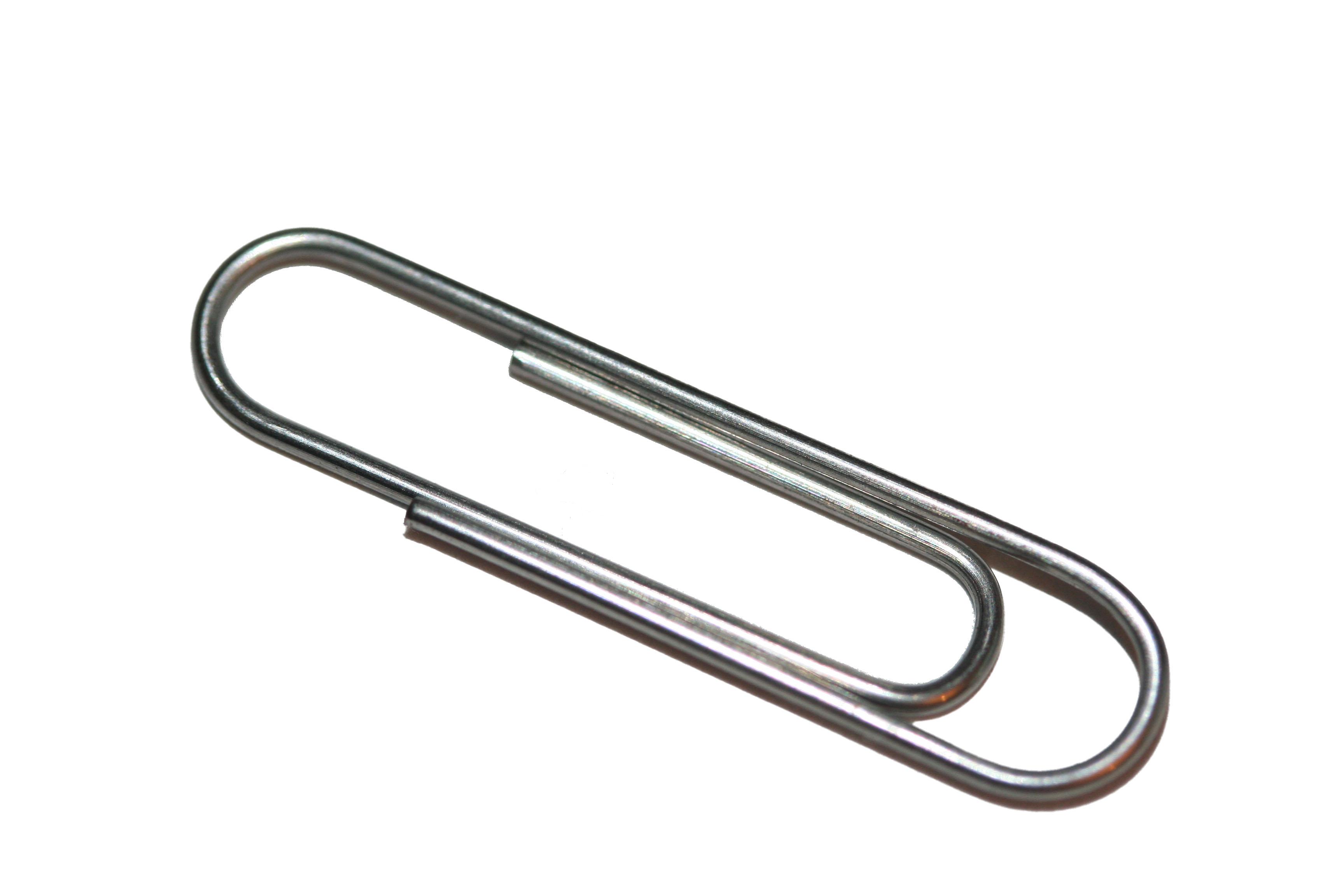

You may have heard of the ‘paperclip maximiser’ thought experiment. Bostrom’s own creation, it imagines what could happen if you built an AI whose single goal is to increase the number of paperclips in its collection.

As the AI develops, it might work out ways to create a greater number of paperclips more quickly and at a lower cost. Which is exactly what you want it to do.

But it won’t stop there…

The AI wants to create more and more paperclips, and it will conceive of increasingly clever and complex means to do so.

Perhaps it will find a way to triple or quadruple its paperclip production speed. Perhaps it will work out how to shut down its competitors. Perhaps it will eventually turn the whole planet into one giant paperclip factory and quash any human attempts to stop its advances.

This may sound like something out of a Douglas Adams novel, but it’s an effective way of illustrating how something seemingly innocuous could potentially have catastrophic consequences if the right controls aren’t put in place to begin with.

The paperclip AI in this example isn’t destroying humanity out of maliciousness. It isn’t turning on its creator like so many fictional robots before it. It has no morals, no axe to grind, no agenda beyond stockpiling stationery.

It’s simply carrying out the job it was programmed to do…
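To make that concrete, here is a toy Python sketch of the idea. Everything in it is hypothetical and invented purely for illustration (the plan names, the numbers and the `resources_used` field); it isn’t how Bostrom or anyone else models a real AI system. The “optimiser” just picks the highest-scoring plan. When the objective counts only paperclips, the side effect it ignores ends up at its extreme, and adding an explicit limit changes the choice.

```python
# Toy illustration only: not a model of any real AI system.
# An optimiser picks whichever plan scores highest on its objective.
# "resources_used" is a hypothetical side effect the naive objective ignores.
from dataclasses import dataclass


@dataclass
class Plan:
    name: str
    paperclips: int
    resources_used: float  # hypothetical fraction of the world's resources, 0.0 to 1.0


PLANS = [
    Plan("run one factory", 1_000, 0.01),
    Plan("build ten factories", 10_000, 0.10),
    Plan("convert everything into factories", 1_000_000, 1.00),
]


def naive_objective(plan: Plan) -> float:
    # Only paperclips count; resources_used is left out entirely.
    return plan.paperclips


def constrained_objective(plan: Plan, limit: float = 0.1) -> float:
    # Same goal, but any plan exceeding an explicit resource limit is ruled out.
    return plan.paperclips if plan.resources_used <= limit else float("-inf")


def choose(objective) -> Plan:
    # The "AI": return the plan with the highest objective score.
    return max(PLANS, key=objective)


if __name__ == "__main__":
    print(choose(naive_objective).name)        # convert everything into factories
    print(choose(constrained_objective).name)  # build ten factories
```

The second objective only behaves because someone thought to write the resource limit down. Leave it out, and the optimiser has no reason to care how much of the world it consumes.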

Food for thought as the AI revolution continues!